The AI Technical Debt Crisis Nobody Warned You About, And How to Fix It

Here is a scenario playing out in engineering teams right now. A company spent the last two years integrating AI into its stack. Copilot for code. An LLM-powered support bot. A few generative features were shipped to production. Leadership is happy, the AI adoption budget got spent, features went live, and the press release wrote itself.

Then the cracks appear. Developers spend more time debugging AI-generated code than writing new features. The support bot gives inconsistent answers. No one is sure which version of which AI model is running where. A simple change to one system breaks three others. Onboarding a new engineer takes weeks because nobody fully understands the codebase anymore.

This is AI technical debt, and it’s accumulating silently inside organizations that are measuring AI adoption without controlling what AI adoption costs them structurally.

The rush to adopt has created a new class of liability. And unlike the technical debt of a decade ago, this one compounds fast.

1. What Is AI Technical Debt, And Why Is It Different?

Technical debt has existed since the first developer chose speed over structure. It’s the cost of doing things the fast way now, knowing you’ll pay more to fix it later. Traditionally, it built up slowly: a skipped code review here, an aging library there, a database schema that made sense in 2018 but doesn’t today.

AI technical debt is the same problem running on a much faster clock. AI tools, code generators, LLM integrations, and model-powered features produce output at a pace humans can’t review, test, or govern in real time. The result is debt that doesn’t accumulate gradually, but it compounds.

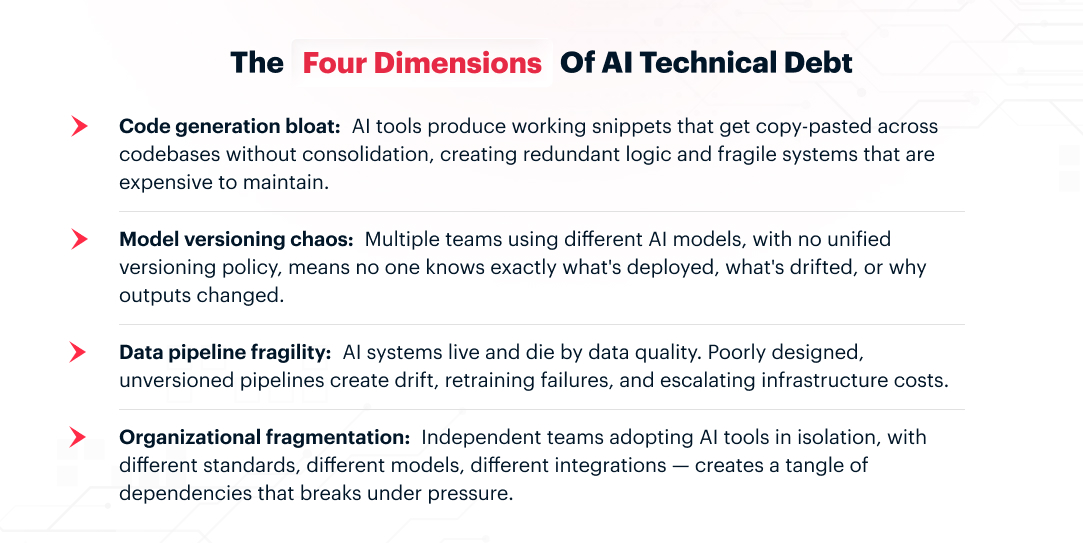

There are four dimensions of AI technical debt that traditional frameworks simply weren’t built to catch:

What makes this especially dangerous is that it’s invisible until it isn’t. The code runs. The model responds. The feature ships. The debt hides in the spaces between: the undocumented decision, the unversioned model, the pipeline nobody owns. It only surfaces when something breaks in a way that’s very hard to trace back.

2. The Scale of the Problem, in Number

This isn’t a niche engineering concern. It’s a board-level business risk, and the data confirms it.

$2.41T Annual cost of technical debt in the U.S. alone.

75% Of tech leaders will face moderate to severe AI technical debt by 2026

20–40% Of the development time consumed by unmanaged tech debt, diverting resources from innovation

The IBM Institute for Business Value found something particularly striking: enterprises that account for tech debt in their AI business cases project 29% higher ROI from AI than those that ignore it.

And those who ignore it, returns can drop by 18–29%. For a $20 billion enterprise, unaddressed AI technical debt can quietly add over $120 million per year in hidden implementation costs.

Accenture’s research across 1,500 global companies found that AI and generative AI tools are now the highest contributors to new technical debt, on par with legacy enterprise applications. The same tools intended to accelerate innovation are also the fastest-growing source of structural liability.

3. How AI Tools Are Creating Debt Faster Than Teams Can Manage It

The Code Generation Problem

GitHub Copilot, Cursor, and similar tools now generate over 35% of developers’ code in commonly used languages. That sounds like a productivity win, and in the short term, it often is. But speed without structure is borrowing against the future.

A 2025 report by Ox Security analyzed 300 open-source projects, including 50 that were fully or partially AI-generated. The finding was direct: AI-generated code is highly functional but systematically lacking in architectural judgment.

It produces working logic, but often without considering modularity, security patterns, domain-specific constraints, or how the code fits into the system it’s joining.

The Paradox Nobody Talks About

Here’s the part that makes this genuinely difficult to solve: the same AI tools generating the debt are also the best tools available for fixing it.

When used with discipline and governance, AI can read legacy codebases, identify debt patterns, assist with refactoring, and compress the time needed for modernization.

It’s a core reason why our AI development practice focuses on governance architecture alongside feature delivery, not just shipping AI, but building it in a way that doesn’t require constant firefighting six months later.

4. The Warning Signs: Does Your Business Have AI Technical Debt?

AI technical debt rarely comes forward by itself. It shows up in symptoms that get blamed on other things: team inefficiency, scope creep, difficult-to-hire-for codebases, and AI features that underperform after launch. Here are the signals worth paying attention to.

Your AI pilots never reach production at scale

A prototype gets built. It works in demo conditions. Then it quietly never ships, or ships to a fraction of the intended users before being shelved. This pattern usually means the underlying infrastructure wasn’t ready for the AI layer being placed on top of it. The debt was in the foundation, not the feature.

Your developers are maintaining more than building

Research shows that approximately 40% of developers already spend two to five working days per month on debugging, refactoring, and maintenance caused by technical debt. If that ratio is worsening in your team, it’s a direct signal that the interest on your debt is consuming your innovation budget.

Nobody can answer: “Which model is running where?”

If your answer to that question is a long pause followed by a meeting request, you have model versioning chaos. Different teams using different models with no unified policy means outputs are inconsistent, debugging is guesswork, and compliance risk is growing by the day.

New engineers struggle to understand your systems

This is one of the clearest lagging indicators. When AI-generated code accumulates without documentation or refactoring, codebases become vague. The engineers who built it can navigate it. Everyone else is lost. This is not a people problem; it’s a debt problem wearing the costume of a talent problem.

Your AI infrastructure costs are rising, but business outcomes aren’t

Escalating cloud and compute costs without proportional business impact is often a sign of duplicate models, redundant data pipelines, and unoptimized AI workloads, all classic outputs of fragmented, ungoverned AI adoption across teams.

5. The Hesitation Trap: Doing Nothing Also Creates Debt

It would be easy to read the above and conclude that the safest path is to slow down AI adoption. That instinct is understandable. It’s also a debt-creating strategy in its own right.

Research from Node Magazine puts the paradox plainly: organizations are either rushing into AI pilots that create immediate technical debt, or avoiding AI entirely and creating forward-looking debt through inaction. Both paths lead to the same destination: systems that can’t support the future of work.

Each quarter spent in extended governance reviews or limited pilots doesn’t preserve optionality indefinitely. It increases the distance between your operating model and where AI-native competitors are already building. The debt from inaction is slower to surface but just as real when it does.

6. A Practical Operating Framework to Build and Ship AI Without the Debt

Most teams that accumulate AI technical debt don’t do it carelessly. They do it without a shared operating structure, so each decision gets made in isolation, each team applies different standards, and the debt compounds in the gaps between them.

The framework below is what we use at LN Webworks when working with engineering teams on AI development and integration. It isn’t a methodology document. It’s a practical operating flow that provides teams with a shared approach to define the problem, assign ownership, validate quality, release safely, and improve after launch. You can see it applied across our client case studies.

The operating flow is: Product job → System contract → Ownership → Evaluation → Release gates → Monitoring → Iteration.

Step 1: Start With the Product Job, Not the Model

The single most common source of AI debt is starting with the technology instead of the task. Teams spin up models, select tools, and build integrations before anyone has clearly defined what the system must actually do, and for whom.

Before architecture, prompting, or model selection, define four things: the user goal (what the user is trying to accomplish), the system task (what the AI-enabled system must do to help), the business value (why this capability matters), and the risk level (what goes wrong if the output is wrong).

A vague starting point, “we need an AI assistant,” produces vague architecture that accrues debt from day one. A specific one: “We need to help support agents draft accurate, policy-aligned replies in under 30 seconds” gives the team something real to design against.

Step 2: Write a System Contract Before a Single Line of Code

Before anyone builds anything, the whole team needs to agree on what the AI feature is actually supposed to do, what it receives, what it returns, and what happens when it gets something wrong. That means defining a shared contract before implementation begins, so everyone builds to the same boundary.

The contract should specify:

- Inputs (what data the system receives)

- Outputs (what it must return)

- Output schema (required structure)

- Constraints (latency, privacy, cost, reliability)

- Failure modes (how the system can fail)

- Fallbacks (what happens when confidence is low, context is missing, or output is invalid).

A concrete example: input is a customer question, account status, and product documentation; output is an answer with citations, a confidence score, and an escalation flag; the fallback routes to human support when the answer is unsupported.

This is the boundary that keeps the system coherent. Software engineers build against it. AI engineers optimize within it.

Step 3: Split Ownership by Function with Shared Accountability at Handoffs

One of the clearest structural causes of AI debt is ownership confusion; nobody is sure who is responsible for what, so things fall through the gaps between teams.

Software engineering typically owns application architecture, APIs and integrations, authentication, workflow orchestration, observability, infrastructure, and fallback logic.

AI engineering typically owns model selection, prompting or fine-tuning, retrieval quality, evaluation design, safety behavior, and drift analysis.

Both functions share ownership of system requirements, output schema, acceptance thresholds, release readiness, and post-launch improvement priorities.

This split avoids two common failures: software teams pretending the model is deterministic, and AI teams behaving as if they are building in isolation.

Step 4: Choose the Right Tool for the Job, Then Evaluate Before You Ship It

Not every problem needs an LLM, and reaching for one when a simpler tool would work is itself a form of debt. Use rules when outputs must be deterministic. Use traditional ML for structured prediction with labeled data.

Use LLMs when language, ambiguity, or retrieval is involved. Use hybrid systems when workflow logic needs to stay controlled, but language handling benefits from AI. The decision should be explicit and documented, not assumed.

Step 5: Evaluate Before Release, Against Real Scenarios, Not Just Demos

Demos and instinct cannot judge AI quality. Before any release, measure task success, groundedness, safety, latency, cost, and stability against real use cases, edge cases, and failure cases.

Both teams agree on pass-fail thresholds in advance. If it misses the threshold, it is not ready. That’s not a delay; it’s the thing that prevents the post-launch firefighting that generates debt fastest.

Step 6: Version Prompts and Configuration, like Code, and Tie It To Your Release Process

Every change to a prompt, a model setting, or a retrieval parameter is a change to how your system behaves. If those changes aren’t tracked, reviewed, and reversible, then when something breaks, and it will, nobody can tell you what changed or when.

This isn’t a separate discipline from your release process. It’s part of it. Treat versioning as the thing that makes your release gate meaningful: you can only know whether a release is safe to push if you know exactly what changed from the last one.

When behavior degrades unexpectedly in production, the teams that recover fastest are the ones who can point to a diff and say, there, that’s what changed.

Step 7: Require Structured Outputs, Monitor Production Behavior, and Feed it Back in Iterations

These three belong together because they form the ongoing discipline that keeps the operating flow running after launch, monitoring, and iteration in the flow. Downstream systems should depend on schema, not wording. Structured fields like answer, citations, confidence score, and escalation flag give engineers something stable to build against, regardless of how the model’s outputs evolve. In production, track response quality over time, retrieval misses, user corrections, and escalation rates, not just uptime

IDC research details that unmanaged AI systems degrade quietly, and by the time users feel it, the debt has compounded. Then feed production signals, edited responses, abandoned flows, and failure examples back into evaluation sets and prompt refinement. That feedback loop is what separates teams that ship AI once from teams that compound its value over time.

7. What This Means for Your Platform Architecture

AI technical debt doesn’t live only in the code. It lives in your platform architecture — the decisions made when integrating AI into your web platform, your product, and your customer-facing systems.

This is where the consequences become most visible to the business: slower feature releases, inconsistent user experiences, and AI-powered features that underperform at scale.

At LN Webworks, we’ve been incorporating AI-readiness assessments into our platform audits, not just performance and accessibility checks, but structural evaluations of how well a platform can support AI integrations without creating compounding fragility.

For a sense of how this applies to common platform issues, our guide to critical website performance issues covers the technical foundations that underpin AI-ready architecture.

The enterprises managing AI technical debt well aren’t doing it by slowing down. They’re doing it by treating AI-readiness as infrastructure, the same way they treat security or scalability. Not a feature you build once, but a property you maintain continuously.

Closing Thoughts

The businesses winning with AI right now are not the ones that adopted it most aggressively. They’re the ones who adopted it most deliberately. They moved fast where it mattered and built clean where it counted. They treated the governance and structural work not as a tax on innovation, but as the investment that makes innovation compoundable.

The window to get ahead of AI technical debt, rather than spend years catching up to it, is still open. But it’s narrowing. Every quarter of ungoverned AI adoption widens the gap between your systems and where they need to be. Every quarter of disciplined adoption closes it.

If this raised questions about where your platform stands at the moment, that’s a conversation worth having. It’s a much shorter and less expensive conversation now than it will be in 18 months.