How to Use AI in Development While Keeping Your Codebase Maintainable

Your team ships faster than ever. Yet six months from now, nobody can explain what half the code does.

You know that moment when a new engineer joins your team, opens the repo, and just… stares? Not because the code is complex in a good way. Because nothing follows the same patterns. Because half the modules look like they were written by different people with different opinions about how error handling should work.

That’s happening at many organizations right now. And increasingly, the “different people” were actually the same AI tool, responding to different prompts on different days.

This guide is for organizations that love what AI coding tools do for speed but are starting to worry about what those tools are doing to their codebases.

We are not here to tell you to stop using them; we use them every day at LN Webworks. We are here to share what actually works to keep things clean while you do.

The Speed Trap: What the Data Actually Shows

First, let’s acknowledge reality: AI coding tools aren’t going anywhere. Stack Overflow’s latest developer survey says 65% of developers use them weekly. GitHub reports 46% of new code on its platform is now AI-generated. This is just how software gets built in 2026.

The problem isn’t the tools. It’s what’s happening to codebases on the other side of all that velocity.

1.7× more issues per AI-generated pull request vs. human-written.

4× increase in code duplication.

63% of devs spent more time debugging AI code than writing it.

That 1.7× number comes from CodeRabbit’s analysis of 470 GitHub repositories, and it’s not just about more bugs. The bugs are worse. AI-authored pull requests carry 1.4× more critical issues and significantly higher rates of security vulnerabilities, such as XSS and insecure password handling. The kind of stuff that shows up in pen test reports.

Meanwhile, MIT Technology Review reported something even more telling from GitClear’s analysis of 153 million lines of code: for the first time, developers are pasting code more often than they’re refactoring it. The most basic hygiene practice in software maintenance, reorganizing code so it stays coherent, is in decline.

Why Does AI-Generated Code Degrade Over Time?

Before we get to solutions, it’s worth understanding why this keeps happening, because the failure mode isn’t random. It’s surprisingly predictable once you see the pattern.

The Convention Blindness Problem

Every mature codebase has conventions: naming patterns, architectural decisions, module boundaries, and error-handling philosophies that often aren’t documented anywhere. They live in the team’s shared understanding. You know them because you’ve worked in the repo for months. The AI doesn’t know them, because it’s working from a prompt rather than from that shared context.

Bill Harding, CEO of GitClear, nailed this in an interview with MIT Technology Review: AI tools have an overwhelming tendency to ignore existing repository conventions and instead generate their own slightly different approach to solving each problem.

Do that fifty times, and you’ve got a codebase that looks like it was assembled by a rotating cast of freelancers who never spoke to each other.

The Local Optimization Trap

Here’s the thing about AI tools: they’re brilliant at solving the problem right in front of them and completely blind to everything else. Each response might work perfectly in isolation. Run the test, it passes. Ship it.

But software isn’t isolated; it’s hundreds of interconnected modules where a change in one area cascades into others. When AI solves each problem independently, without awareness of how its solution affects the broader architecture, you end up with code that passes today’s tests but makes tomorrow’s changes harder. And this compounds silently.

Each AI-generated feature adds a tiny bit of friction. None of it is visible until someone tries to make a cross-cutting change and discovers that nothing fits together the way it should.

The Review Bottleneck

And here’s where the organizational challenge meets the technical one. Your AI tools produce code faster than your team can review it.

That’s not a criticism of your team; it’s just math. The estimated quality deficit for 2026 (the gap between code generated and code properly reviewed) sits at roughly 40%.

Pull requests per developer are up 20% with AI assistance, but incidents per pull request are up 23.5%.
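Those two figures compound. As a rough illustration, and assuming both percentages describe the same developers over the same period (an assumption the cited numbers don’t guarantee), incidents per developer rise by nearly half:

```latex
\frac{\text{incidents}}{\text{developer}}
  = \frac{\text{PRs}}{\text{developer}} \times \frac{\text{incidents}}{\text{PR}}
  \quad\Rightarrow\quad 1.20 \times 1.235 = 1.482
```

That works out to roughly 48% more incidents per developer, all of which still have to pass through the same review step.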

The review step, the one place where architectural coherence and convention compliance actually get enforced, is being outrun by the firehose of code coming through the pipeline.

The Vibe Coding Reckoning

We can’t talk about AI-assisted development in 2026 without talking about vibe coding, a term Andrej Karpathy coined in February 2025 that Collins Dictionary later named its Word of the Year.

You probably know the pitch: describe what you want in plain language, let AI generate the code, accept it without deep review, iterate by prompting. “Give in to the vibes,” as Karpathy put it.

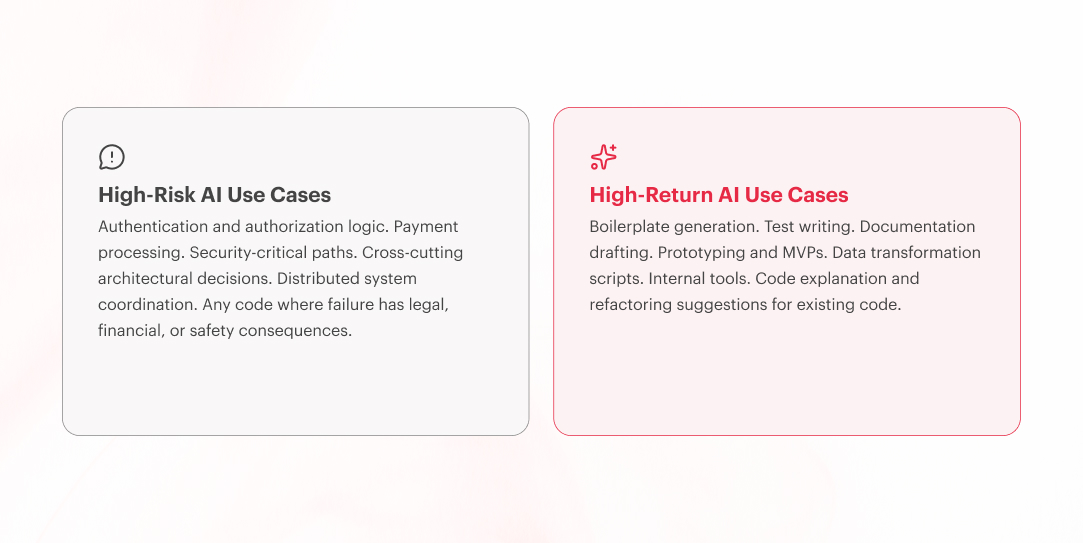

And look, for prototyping and internal tools, it genuinely works. When the cost of bugs is low and the codebase is disposable, vibe coding is transformative.

But for anything that needs to survive contact with real users, real scale, and real maintenance cycles? That’s where the dream breaks down.

An estimated 8,000+ startups that built production apps with AI now need full or partial rebuilds, at costs of $50K to $500K each.

The total cleanup bill?

Somewhere between $400 million and $4 billion.
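The range follows from multiplying the estimated 8,000 affected startups by the low and high ends of the per-project rebuild cost:

```latex
8{,}000 \times \$50\text{K} = \$400\text{M}
\qquad\qquad
8{,}000 \times \$500\text{K} = \$4\text{B}
```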

Vibe-coded projects accumulate technical debt 3× faster than traditionally developed software. And the pattern is frustratingly predictable: the system works great until it hits real pressure (scale, integrations, data volume), and then it collapses at precisely the moment you can least afford it.

The takeaway isn’t that vibe coding is useless; it’s that it has a specific, bounded role. Build the prototype in a weekend. Validate the idea. Then rebuild properly if it works. The teams that get in trouble are the ones that skip the “rebuild properly” step and ship the prototype straight to production.

A Practical Framework: Keeping AI-Assisted Codebases Maintainable

Enough about the problem. Let’s talk about what actually works. What follows is the set of practices we apply across AI development projects at LN Webworks, which mirror the patterns shared by the best-performing teams in the DORA research. There are five layers. They reinforce each other, so skipping one makes the others less effective.

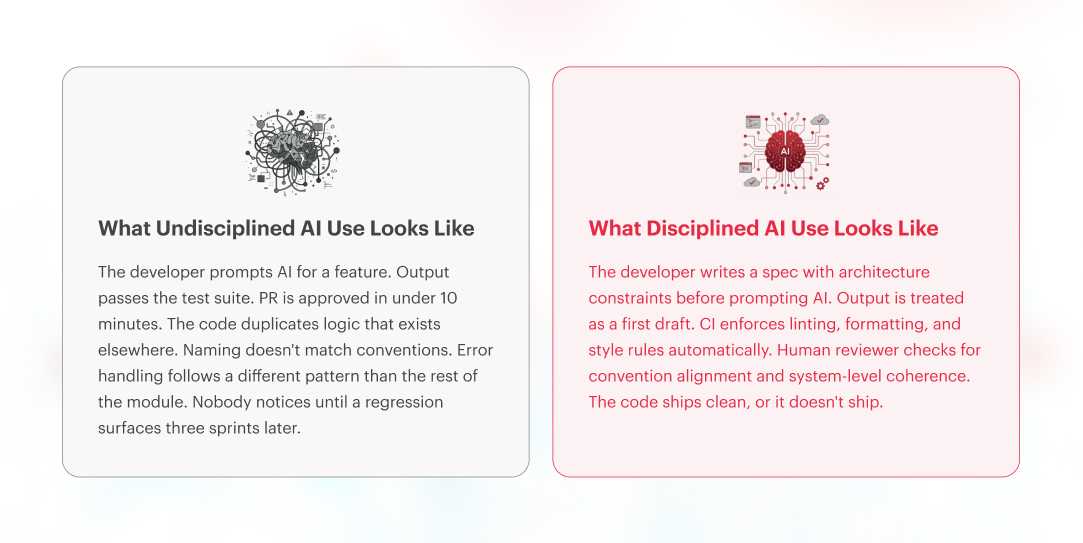

Layer 1: Specify Before You Prompt

This is the single highest-leverage thing you can do, and most teams skip it entirely. Before anyone prompts the AI, write down the architecture constraints. Define the module boundaries.

Document the conventions, even if it’s just a paragraph in the repo’s README. The AI knows nothing about your system beyond what you put in front of it. Your job is to give it enough context that its output at least starts in the right ballpark.

Teams that skip this step end up spending their entire review budget undoing problems that shouldn’t have existed in the first place.
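What “writing it down” looks like will vary by team. One hypothetical approach is to keep the spec as structured data in the repo and prepend it to every task, so each prompt starts from the same constraints instead of whatever the person prompting happened to remember that day. This is a minimal Python sketch. `ProjectSpec` and `build_prompt` are illustrative names, not part of any AI tool’s API, and the example rules are made up.

```python
from dataclasses import dataclass


@dataclass
class ProjectSpec:
    """Hypothetical record of the context a team writes down before prompting."""
    architecture_constraints: list[str]
    module_boundaries: dict[str, str]  # module name -> what it is responsible for
    conventions: list[str]


def build_prompt(spec: ProjectSpec, task: str) -> str:
    """Prepend the written spec to a task so every prompt starts from the same context."""
    lines = ["## Architecture constraints"]
    lines += [f"- {rule}" for rule in spec.architecture_constraints]
    lines.append("## Module boundaries")
    lines += [f"- {name}: {role}" for name, role in spec.module_boundaries.items()]
    lines.append("## Conventions")
    lines += [f"- {rule}" for rule in spec.conventions]
    lines += ["## Task", task]
    return "\n".join(lines)


# Example spec (illustrative values only, not real project rules).
spec = ProjectSpec(
    architecture_constraints=["Controllers never query the database directly."],
    module_boundaries={"billing": "invoices and payments", "accounts": "users and auth"},
    conventions=["Raise domain errors from billing.errors; never return None on failure."],
)
prompt = build_prompt(spec, "Add a refund endpoint to billing.")
```

Because the spec lives in version control, changes to the conventions get reviewed like any other code, and every engineer’s prompts pick up the same update.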

Layer 2: Automate the Non-Negotiables

Here’s a freebie: AI-generated code introduces way more formatting inconsistencies and naming violations than human-written code.

The good news?

The fix is mechanical, not heroic.

CI-enforced formatters, linters, and style rules eliminate entire categories of AI-driven issues before they even reach a human reviewer.

CI (continuous integration) is a development practice in which, every time a developer pushes new code to the shared codebase, an automated system immediately runs a series of checks on it, before any human reviews it or it goes anywhere near production.

Think of it as a filter that catches the low-hanging problems automatically, so your reviewers can focus on the stuff that actually requires judgment.
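In practice, you would reach for your language’s established formatter and linter rather than write rules by hand. To show the mechanism, here is a minimal hypothetical check in Python. It parses source with the standard-library `ast` module, flags any function not named in snake_case (an assumed house convention), and returns a non-zero status so that a CI job calling it would fail.

```python
import ast
import re

# Assumed house convention: function names are snake_case (leading underscores allowed).
SNAKE_CASE = re.compile(r"^_{0,2}[a-z][a-z0-9_]*$")


def naming_violations(source: str, filename: str = "<string>") -> list[str]:
    """Return one message per function or method whose name is not snake_case."""
    problems = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            if not SNAKE_CASE.match(node.name):
                problems.append(
                    f"{filename}:{node.lineno}: function '{node.name}' is not snake_case"
                )
    return problems


def check_files(paths: list[str]) -> int:
    """CI-style entry point: print every violation, return 1 if any were found, else 0."""
    problems = []
    for path in paths:
        with open(path, encoding="utf-8") as handle:
            problems += naming_violations(handle.read(), path)
    for problem in problems:
        print(problem)
    return 1 if problems else 0
```

A CI step could call `sys.exit(check_files(paths))` on the files in each push. The job then fails on naming drift before a reviewer ever has to spend attention on it.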

Layer 3: Review for Architecture, Not Just Correctness

Most code reviews ask: “Does this work?” For AI-generated code, the better question is: “Does this fit?”

Does it follow the conventions used by the rest of the repo?

Does it duplicate existing logic?

Does it introduce patterns that are inconsistent with the module?

Does it make the next change easier or harder?

These are the questions human reviewers need to own, because they’re exactly the ones AI can’t answer.
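Reviewers still have to judge fit, but some of these questions can get mechanical help. For “Does it duplicate existing logic?”, a crude starting point is to fingerprint function bodies and group the identical ones. The Python sketch below uses only the standard library and is an illustration, not a real clone detector. It catches verbatim copy-paste, even under a new function name, but misses copies with renamed variables or small edits, which dedicated duplication tools handle better.

```python
import ast
import hashlib
from collections import defaultdict


def _body_fingerprint(func: ast.AST) -> str:
    """Hash a function's body structure, ignoring the function's name and line numbers."""
    dumped = "".join(ast.dump(stmt, include_attributes=False) for stmt in func.body)
    return hashlib.sha256(dumped.encode("utf-8")).hexdigest()


def find_duplicate_functions(source: str) -> list[list[str]]:
    """Group the names of functions whose bodies are structurally identical."""
    groups: dict[str, list[str]] = defaultdict(list)
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            groups[_body_fingerprint(node)].append(node.name)
    return [names for names in groups.values() if len(names) > 1]
```

Run over a pull request’s files together with the modules they touch, a non-empty result gives the reviewer a concrete starting point: “this new helper already exists over there.”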

Layer 4: Measure What Actually Matters

Quick gut check: how does your team measure whether AI tools are working?

If the answer is PRs merged per sprint, that’s like rating a restaurant by how fast the food comes out. Speed matters, but only if the food doesn’t send people to the hospital.

The 2025 DORA Report introduced rework rate as a fifth core metric, tracking unplanned deployments caused by production issues. This is the one that catches AI quality problems that passed every gate but still broke in prod.

Beyond that, you should be tracking code churn ratios (how much is rewritten within 2 weeks of shipping) and defect density, segmented by AI-generated vs. human-written code.
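None of these metrics needs special tooling to get started. The Python sketch below shows one way to compute them, under some stated assumptions. The `Change` record and its fields are hypothetical. You need some way to tag changes as AI-generated (a PR label, for example). Defect density is normalized per 1,000 added lines, which is a common convention rather than a DORA definition.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """Hypothetical record of one merged change, e.g. exported from VCS and issue tracker."""
    ai_generated: bool
    lines_added: int
    lines_rewritten_within_14_days: int  # churn: lines changed again within two weeks
    defects: int  # defects later traced back to this change


def churn_ratio(changes: list[Change]) -> float:
    """Fraction of added lines that were rewritten within two weeks."""
    added = sum(c.lines_added for c in changes)
    rewritten = sum(c.lines_rewritten_within_14_days for c in changes)
    return rewritten / added if added else 0.0


def defect_density(changes: list[Change]) -> float:
    """Defects per 1,000 added lines."""
    added = sum(c.lines_added for c in changes)
    return 1000 * sum(c.defects for c in changes) / added if added else 0.0


def rework_rate(unplanned_deployments: int, total_deployments: int) -> float:
    """Share of deployments that were unplanned responses to production issues."""
    return unplanned_deployments / total_deployments if total_deployments else 0.0


def segmented_report(changes: list[Change]) -> dict[str, dict[str, float]]:
    """Churn ratio and defect density, split into AI-generated vs. human-written changes."""
    report = {}
    for label, is_ai in (("ai", True), ("human", False)):
        subset = [c for c in changes if c.ai_generated == is_ai]
        report[label] = {
            "churn_ratio": churn_ratio(subset),
            "defect_density": defect_density(subset),
        }
    return report


# Illustrative data only: AI churn 0.2 and density 4.0; human churn 0.05 and density 1.0.
sample = [Change(True, 1000, 200, 4), Change(False, 2000, 100, 2)]
report = segmented_report(sample)
```

The important design choice is the segmentation. A blended churn number can look acceptable while the AI-generated slice is quietly four times worse.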

Layer 5: Invest in the Human Layer

This is the layer most teams underinvest in, and honestly, it might be the most important one on this list. AI tools don’t reduce the need for experienced engineers; they increase it.

Somebody has to understand the system well enough to judge whether the AI’s output actually belongs there. Somebody has to maintain the specifications and conventions. Somebody has to make the architecture calls that the AI simply can’t.

Strong teams with good processes get stronger. Teams with shaky foundations? Their problems intensify. The tool doesn’t fix the team. The team determines whether the tool is a net positive or a liability.

It’s why our digital engineering services always pair AI tooling with senior architectural oversight, and why our UI/UX design practice emphasizes specification-first workflows that give AI tools the context they need to produce code worth keeping.

Where AI Genuinely Excels: Let It Run Here

We have spent a lot of time on the risks. Let’s balance the ledger, because there are areas where AI tools are genuinely great, and smart teams should lean into them hard.

Boilerplate and scaffolding: CRUD endpoints, config files, data transformations. AI handles these reliably. Nobody misses writing them by hand.

Test generation: AI is surprisingly good at writing tests, but most teams underuse it here. If you’re using AI for production code but not for the tests that validate it, you’re leaving the best use case on the table.

Documentation: DORA’s research associates a 25% increase in AI adoption with a 7.5% increase in documentation quality. Let it draft your READMEs and API docs. Human review for accuracy. Move on.

Prototyping: Validate an idea? Vibe-code it. Build it in a weekend. If it works, throw it away and build the real version properly. Disposable prototypes cost nothing. Shipping a prototype to production costs everything.

Building with AI tools? Make sure the architecture holds.

Our AI development practice pairs speed with senior architectural oversight, so you ship fast without shipping fragile.

This Is a Culture Problem, Not a Tooling Problem

If you take one thing from this piece, let it be this: the teams that come out of the AI coding era with clean, maintainable systems won’t be the ones who picked the best AI tool. They’ll be the ones who built the discipline to use any tool well.

Treat AI output as a first draft. Write specs before you prompt. Automate every quality gate you can. Save your humans for the judgments that require judgment. Measure quality, not just speed.

If you are unsure whether your current practices will hold up, let’s talk it through. That’s a question worth answering before the codebase answers it for you.

Frequently Asked Questions

Why does AI-generated code create maintainability problems?

AI coding tools optimize for solving the immediate prompt, not for fitting into your broader codebase. Their use is also associated with 4x more duplicated code and dramatically less refactoring, producing codebases whose parts work in isolation but become increasingly difficult to change over time.

What is vibe coding, and why is it risky for production systems?

Vibe coding is a development practice where developers describe goals in natural language and accept AI-generated code without thorough review. Research shows vibe-coded projects accumulate technical debt 3x faster, and an estimated 8,000+ startups now need partial or full rebuilds of AI-generated codebases at costs ranging from $50K to $500K each.

How should teams review AI-generated code differently from human-written code?

AI-generated code requires stricter review because it introduces more defects, and more severe ones: 1.4x more critical issues and 1.7x more major issues per pull request. Teams should enforce CI-automated linting and formatting, treat all AI output as a first draft requiring human architectural review, break AI tasks into small chunks with frequent review cycles, and track AI-attributed defect rates as a distinct engineering metric alongside traditional quality indicators.

Can vibe coding be used safely for anything?

Yes, vibe coding is genuinely effective for prototyping, internal tools, and disposable MVPs. IBM reports a 60% reduction in development time for enterprise internal apps using AI-assisted coding. The key distinction is treating vibe-coded output as disposable: build the prototype to validate the idea, then rebuild properly with architecture and conventions if it works. The teams that get in trouble are the ones that ship the prototype straight to production without the rebuild step.